In the modern healthcare landscape, organizations are collecting vast amounts of data from various sources, including electronic health records (EHRs), medical devices, and clinical systems. These sources contain a wealth of valuable clinical data, often stored in proprietary formats with non-standard codes and structures. To effectively harness this data, ETL in the healthcare industry plays a pivotal role.

The diverse nature of data sources calls for restructuring and transforming medical data into a common format, harmonizing terminologies, and establishing optimal links to other data sources. In this article, we will explore the importance of ETL in healthcare, its challenges, and best practices for implementing an ETL process in clinical data management.

What is ETL in healthcare?

ETL stands for Extract, Transform, Load. It is a data integration process that extracts data from one or more sources, transforms it to fit a specific format or structure, and loads it into a target database or data warehouse. The primary goal of ETL is to make data usable for analysis, reporting, and other business intelligence purposes.

ETL projects in the healthcare domain are most often implemented alongside healthcare data mining software, particularly when transforming data from one format to another. For example, a source database may store data in the HL7 v2 format, but to be used for other purposes, the data needs to be converted to the FHIR® standard.
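As a rough illustration of such a conversion, the sketch below maps a single HL7 v2 PID segment onto a minimal FHIR Patient resource. The sample segment and the helper name `pid_to_fhir_patient` are assumptions made for this example; a production mapper would also need to handle repeating fields, escape sequences, and the remaining segments of the message.

```python
import json

# Map HL7 v2 administrative sex codes to FHIR gender values.
SEX_TO_GENDER = {"M": "male", "F": "female", "O": "other", "U": "unknown"}


def pid_to_fhir_patient(pid_segment: str) -> dict:
    """Convert one HL7 v2 PID segment into a minimal FHIR Patient dict."""
    fields = pid_segment.split("|")
    if fields[0] != "PID":
        raise ValueError("expected a PID segment")
    # Pad so that short segments do not raise IndexError.
    fields += [""] * (9 - len(fields))

    identifier = fields[3].split("^")[0]              # PID-3: patient identifier
    name_parts = fields[5].split("^")                 # PID-5: family^given
    birth = fields[7]                                 # PID-7: YYYYMMDD
    gender = SEX_TO_GENDER.get(fields[8], "unknown")  # PID-8: administrative sex

    patient = {"resourceType": "Patient", "gender": gender}
    if identifier:
        patient["identifier"] = [{"value": identifier}]
    if name_parts[0]:
        name = {"family": name_parts[0]}
        if len(name_parts) > 1 and name_parts[1]:
            name["given"] = [name_parts[1]]
        patient["name"] = [name]
    if len(birth) >= 8:
        patient["birthDate"] = f"{birth[:4]}-{birth[4:6]}-{birth[6:8]}"
    return patient


# Hypothetical sample segment, not taken from a real system.
sample = "PID|1||12345^^^HOSP^MR||DOE^JOHN||19800101|M"
patient = pid_to_fhir_patient(sample)
print(json.dumps(patient, indent=2))
```

Only four PID fields are mapped here to keep the example short; everything else in the segment is ignored.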

Three main stages of ETL

The clinical ETL process involves three main steps, each of which plays a crucial role in preparing data for analysis and reporting. Here’s a brief description of each stage:

Extraction

In this stage, raw data is extracted from disparate sources, such as databases, files, or APIs. Data extraction in healthcare can be a complex process, as data may be stored in different formats, structures, or locations.

For example, in healthcare, data may be stored in different EHR systems or clinical applications, each with its own format and schema. The goal of the extraction stage is to gather all the relevant data needed for analysis or reporting.
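A minimal sketch of the extraction stage might look like the following. It pulls rows from two differently shaped sources, a CSV export and a relational table, and tags each row with its origin. The source names, table layout, and sample values are all hypothetical.

```python
import csv
import io
import sqlite3

# Hypothetical source 1: a CSV export from a lab system.
LAB_CSV = "patient_id,test,value\nP001,glucose,5.4\nP002,glucose,6.1\n"


def extract_from_csv(text: str) -> list[dict]:
    """Read rows from CSV text and tag them with their origin."""
    reader = csv.DictReader(io.StringIO(text))
    return [{**row, "source": "lab_csv"} for row in reader]


def extract_from_db(conn: sqlite3.Connection) -> list[dict]:
    """Read rows from a relational source and tag them with their origin."""
    conn.row_factory = sqlite3.Row
    rows = conn.execute("SELECT patient_id, test, value FROM results").fetchall()
    return [{**dict(row), "source": "ehr_db"} for row in rows]


# Hypothetical source 2: an in-memory database standing in for an EHR.
ehr = sqlite3.connect(":memory:")
ehr.execute("CREATE TABLE results (patient_id TEXT, test TEXT, value REAL)")
ehr.execute("INSERT INTO results VALUES ('P003', 'hemoglobin', 13.2)")

raw_records = extract_from_csv(LAB_CSV) + extract_from_db(ehr)
print(len(raw_records))
```

Note that the CSV values arrive as strings while the database values arrive as numbers. Extraction deliberately leaves such differences alone; reconciling them is the job of the transformation stage.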

Transformation

Once the data is extracted, it needs to be transformed into a format that is consistent and usable for analysis. This stage involves cleaning and validating the data and converting it into a format that can be easily integrated with other data sources.

Data cleaning in particular is an essential step in ensuring the quality of data by identifying and rectifying errors and inconsistencies within datasets. These issues can occur within standalone data collections like files and databases due to factors such as misspellings, missing information, or invalid data.

Transformation may include tasks such as data mapping, data profiling, data enrichment, and data consolidation. The goal of the transformation stage is to prepare the data for analysis or reporting and to ensure that it is accurate, complete, and consistent.
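The cleaning, validation, and mapping tasks described above can be sketched roughly as follows. The function name `clean_record`, the validation rules, and the small local-name-to-LOINC table are assumptions for illustration; a real pipeline would draw its mappings from a maintained terminology source.

```python
from typing import Optional

# Illustrative mapping from local test names to LOINC codes (assumed subset).
LOCAL_TO_LOINC = {"glucose": "2345-7", "hemoglobin": "718-7"}


def clean_record(record: dict) -> Optional[dict]:
    """Return a cleaned record, or None if it fails validation."""
    patient_id = (record.get("patient_id") or "").strip().upper()
    test = (record.get("test") or "").strip().lower()
    code = LOCAL_TO_LOINC.get(test)
    try:
        value = float(record.get("value"))
    except (TypeError, ValueError):
        return None  # missing or non-numeric value
    if not patient_id or code is None:
        return None  # missing identifier or unmapped test name
    return {"patient_id": patient_id, "loinc": code, "value": value}


# Hypothetical raw rows with typical quality problems.
raw = [
    {"patient_id": " p001 ", "test": "Glucose", "value": "5.4"},
    {"patient_id": "P002", "test": "glucose", "value": "n/a"},   # invalid value
    {"patient_id": "", "test": "hemoglobin", "value": "13.2"},   # missing id
    {"patient_id": "P003", "test": "Hemoglobin ", "value": 13.2},
]
cleaned = [r for r in map(clean_record, raw) if r is not None]
rejected = len(raw) - len(cleaned)
print(cleaned, rejected)
```

Counting rejected rows rather than silently dropping them makes it possible to check later that no data went missing between stages.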

Loading

After the data has been extracted and transformed, it is loaded into a target database or data warehouse. This stage involves inserting the transformed data into a structured database, where it can be easily accessed and analyzed.

Loading may include tasks such as data aggregation, data summarization, and data indexing. The goal of the loading stage is to create a centralized repository of data that can be used for analysis, reporting, and other business intelligence purposes.
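As a small sketch of the loading stage, the example below inserts transformed rows into a target table, adds an index, and builds a summary table. An in-memory SQLite database stands in for the warehouse, and the table and column names are hypothetical.

```python
import sqlite3

# Hypothetical transformed rows: (patient_id, LOINC code, value).
records = [
    ("P001", "2345-7", 5.4),
    ("P001", "2345-7", 6.0),
    ("P003", "718-7", 13.2),
]

conn = sqlite3.connect(":memory:")  # stand-in for the target warehouse
conn.execute("CREATE TABLE observation (patient_id TEXT, loinc TEXT, value REAL)")

# Insert all rows in one transaction so a failure rolls the whole batch back.
with conn:
    conn.executemany("INSERT INTO observation VALUES (?, ?, ?)", records)

# Index the column that reports are expected to filter on.
conn.execute("CREATE INDEX idx_observation_patient ON observation (patient_id)")

# Precompute a per-patient summary for reporting.
conn.execute(
    """
    CREATE TABLE observation_summary AS
    SELECT patient_id, loinc, COUNT(*) AS n, AVG(value) AS mean_value
    FROM observation
    GROUP BY patient_id, loinc
    """
)
summary = conn.execute(
    "SELECT patient_id, loinc, n, mean_value FROM observation_summary "
    "ORDER BY patient_id"
).fetchall()
print(summary)
```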

Streaming ETL

Unlike traditional batch ETL, which processes large volumes of data at scheduled intervals, streaming ETL continuously processes and analyzes data in real time as it is generated.

With streaming ETL, data is collected as soon as it is generated and is processed in small chunks, often referred to as micro-batches. Each micro-batch is transformed and loaded into a destination system, such as a data warehouse or a real-time dashboard. This allows for near real-time analysis and insights to be obtained from the data.

Streaming ETL is commonly used in scenarios where data needs to be analyzed and acted upon quickly. Some popular tools for streaming ETL include Apache Kafka, Apache Flink, and Apache Spark Streaming.

Streaming ETL has many applications in healthcare. For example, it can be used to continuously process patient data generated by medical devices such as EKGs, blood glucose monitors, and pulse oximeters. This data can be transformed, loaded, and analyzed in real time to monitor patients’ vital signs, detect anomalies, and alert healthcare providers to critical changes in a patient’s condition.
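The micro-batch idea can be sketched in plain Python as shown below. This is not how Kafka, Flink, or Spark are used in practice; it only illustrates grouping a stream into small batches and flagging anomalous readings. The readings are hypothetical, and the alert threshold is an assumed value for illustration, not clinical guidance.

```python
from itertools import islice
from typing import Iterable, Iterator

SPO2_ALERT_THRESHOLD = 90  # assumed value for illustration, not clinical guidance


def micro_batches(stream: Iterable[dict], size: int) -> Iterator[list[dict]]:
    """Group a stream of readings into small batches of at most `size` items."""
    iterator = iter(stream)
    while batch := list(islice(iterator, size)):
        yield batch


def process_batch(batch: list[dict]) -> list[dict]:
    """Inspect one micro-batch and return the readings that need an alert."""
    return [r for r in batch if r["spo2"] < SPO2_ALERT_THRESHOLD]


# Hypothetical pulse oximeter readings; a real feed would come from a broker.
readings = [
    {"patient_id": "P001", "spo2": 97},
    {"patient_id": "P001", "spo2": 96},
    {"patient_id": "P002", "spo2": 88},
    {"patient_id": "P001", "spo2": 95},
    {"patient_id": "P002", "spo2": 86},
]

alerts = []
for batch in micro_batches(readings, size=2):
    alerts.extend(process_batch(batch))
print(alerts)
```

Because `micro_batches` consumes its input lazily, the same loop would work on an unbounded generator that yields readings as they arrive.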

ETL vs. ELT

ELT stands for Extract, Load, Transform. In the ELT approach, data is first extracted from the source systems and loaded into a target database or data lake in its raw form; the transformation is then performed inside the target system. The ELT approach is often used when organizations need to perform complex transformations that require more flexibility and processing power than can be achieved using traditional ETL tools.

The main difference between ETL and ELT is the order in which the transformation step is performed. In ETL, the transformation is performed before the data is loaded into the target system, while in ELT, the data is loaded first and then transformed. The choice between ETL and ELT depends on the specific needs of the organization and the complexity of the HL7 data integration process.
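To make the difference in ordering concrete, here is a minimal ELT sketch: the raw rows are loaded unchanged into a staging table, and the cleanup then runs as SQL inside the target. SQLite stands in for the target system, and the table names and the crude numeric check are assumptions for this example.

```python
import sqlite3

conn = sqlite3.connect(":memory:")  # stand-in for the target database

# Load: store the source rows unchanged in a raw staging table.
conn.execute("CREATE TABLE raw_results (patient_id TEXT, test TEXT, value TEXT)")
conn.executemany(
    "INSERT INTO raw_results VALUES (?, ?, ?)",
    [(" p001 ", "Glucose", "5.4"), ("P002", "glucose", "n/a")],
)

# Transform: clean the data inside the target, using its own SQL engine.
# The GLOB pattern is a crude check that the value starts with a digit.
conn.execute(
    """
    CREATE TABLE clean_results AS
    SELECT UPPER(TRIM(patient_id)) AS patient_id,
           LOWER(TRIM(test))       AS test,
           CAST(value AS REAL)     AS value
    FROM raw_results
    WHERE value GLOB '[0-9]*'
    """
)
rows = conn.execute("SELECT * FROM clean_results").fetchall()
print(rows)
```

In an ETL pipeline the same trimming and type conversion would happen before the insert, and the raw staging table would not exist in the target.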

Challenges of ETL in healthcare

Let’s take a look at some of the common ETL challenges and issues you have to be aware of when implementing an ETL process for healthcare data integration:

Compatibility between source and target data

Healthcare systems often lack uniformity in data entry, leading to inconsistencies in fields, vocabularies, and terminologies across different sources. This lack of compatibility poses a challenge when integrating data from multiple systems. Incompatibility issues can result in information loss if the target system cannot effectively integrate the source data.

Data quality

Health data is susceptible to data quality issues such as misspellings, value discrepancies, database constraint violations, and missing or contradicting information. Ensuring the accuracy and reliability of the data obtained through ETL processes is crucial to provide clear, thorough, and correct information to end users. Data quality issues must be addressed to maintain the integrity of the integrated data.

Scalability

Healthcare data is typically high in volume, and the source systems undergo constant updates and operational changes. Designing and maintaining ETL processes that can handle the growing data size and workload while maintaining reasonable response times becomes a challenge. Scalability issues need to be addressed to ensure efficient data integration and processing.

Skill disparity

Skill disparity within teams can be a significant challenge, since developers have to understand both healthcare data and software development, which requires a diverse skill set. Clinical experts are more familiar with standard terminologies like SNOMED CT and LOINC, while technical experts focus on healthcare infrastructure communication and software engineering. This divide in expertise can make it difficult to collaborate effectively and can slow down the ETL process.

Regulatory compliance

Healthcare organizations must comply with various regulations, such as HIPAA, which can impact how data is collected, stored, and managed. ETL processes must be designed with regulatory compliance in mind to avoid potential legal and financial risks.

Best practices for implementing ETL in healthcare

To ensure the optimal performance of your ETL process, it’s crucial to follow established best practices that have been refined through experience. We’ve outlined some approaches that can significantly enhance your ETL for clinical data integration workflow:

1. Leverage parallel processing

By leveraging the automation capabilities of ETL processes, you can execute extract, transform, and load operations simultaneously through parallel processing. This approach enables you to perform several iterations concurrently, increasing your ability to work with data rapidly and minimizing the time required to complete the task. Your infrastructure will likely be the limiting factor for the number of simultaneous iterations that can be performed.
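One way to sketch this in Python is with a thread pool that runs an independent pipeline per source. The source names and the simulated `run_pipeline` function are hypothetical, and `max_workers` plays the role of the infrastructure limit mentioned above.

```python
from concurrent.futures import ThreadPoolExecutor


def run_pipeline(source: str) -> tuple[str, int]:
    """Extract, transform, and load one source; return its name and row count."""
    rows = [f"{source}-row-{i}" for i in range(3)]  # extract (simulated)
    rows = [r.upper() for r in rows]                # transform
    return source, len(rows)                        # load (simulated)


# Hypothetical source names.
sources = ["ehr_a", "ehr_b", "lab", "devices"]

# max_workers caps concurrency; tune it to what the infrastructure can bear.
with ThreadPoolExecutor(max_workers=2) as pool:
    results = dict(pool.map(run_pipeline, sources))
print(results)
```

Threads suit pipelines that mostly wait on databases or network calls. For CPU-heavy transformations, `ProcessPoolExecutor` from the same module is usually the better fit.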

2. Utilize data caching

Data caching involves storing frequently used data in a readily accessible location, such as a local disk or memory. By pre-caching data, you can significantly improve the overall performance of your ETL process. However, bear in mind that this approach requires ample RAM and free disk space. Caching also supports parallel processing, since concurrent jobs can read frequently used data from the cache instead of fetching it from the source each time.
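A simple in-memory form of caching can be sketched with `functools.lru_cache`. The lookup function and its small mapping are illustrative assumptions; the call counter is only there to show how many lookups the cache avoids.

```python
from functools import lru_cache

lookup_calls = 0  # counts how often the slow lookup actually runs


@lru_cache(maxsize=1024)
def lookup_code(local_name: str) -> str:
    """Resolve a local test name to a standard code (simulated slow lookup)."""
    global lookup_calls
    lookup_calls += 1
    # Illustrative mapping; a real pipeline would query a terminology service.
    return {"glucose": "2345-7", "hemoglobin": "718-7"}.get(local_name, "unknown")


tests = ["glucose", "hemoglobin", "glucose", "glucose", "hemoglobin"]
codes = [lookup_code(t) for t in tests]
print(codes, lookup_calls)
```

The `maxsize` argument bounds memory use, which reflects the trade-off between speed and RAM noted above.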

3. Perform regular maintenance

Regular maintenance and optimization of data tables are critical because smaller tables can facilitate faster and more straightforward ETL processes. To streamline your ETL pipeline’s performance, it is often recommended to split large data tables into smaller ones.
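As one possible way to split a large table, the sketch below partitions a table into one smaller table per year. The `encounters` table, its columns, and the per-year naming scheme are hypothetical, and SQLite is used only as a stand-in; many database engines offer built-in partitioning that would be preferable in practice.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE encounters (id INTEGER, visit_date TEXT)")
conn.executemany(
    "INSERT INTO encounters VALUES (?, ?)",
    [(1, "2022-03-01"), (2, "2023-07-15"), (3, "2023-11-30")],
)

# Find the distinct years present in the data.
years = [
    row[0]
    for row in conn.execute(
        "SELECT DISTINCT substr(visit_date, 1, 4) FROM encounters ORDER BY 1"
    )
]

counts = {}
for year in years:
    # Only build table names from validated values, never from user input.
    if not (year.isdigit() and len(year) == 4):
        raise ValueError(f"unexpected year value: {year!r}")
    table = f"encounters_{year}"
    conn.execute(f"CREATE TABLE {table} (id INTEGER, visit_date TEXT)")
    conn.execute(
        f"INSERT INTO {table} "
        "SELECT id, visit_date FROM encounters WHERE substr(visit_date, 1, 4) = ?",
        (year,),
    )
    counts[table] = conn.execute(f"SELECT COUNT(*) FROM {table}").fetchone()[0]
print(counts)
```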

4. Implement effective error handling

An error handling mechanism is essential for ETL processes. Ensure the mechanism captures the project and task names, the error number, and the error description. The monitoring page should show all the necessary information about the task’s progress, so that when an error interrupts the entire task, analyzing the page helps you resolve the issue quickly. It’s recommended that you log all errors in a separate file, but pay particular attention to those that impact your business logic.
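A minimal sketch of such a mechanism, using Python's standard `logging` module, is shown below. The project and task names, the `run_task` wrapper, and the log format are assumptions for illustration; an in-memory buffer stands in for the separate error log file.

```python
import io
import logging

log_buffer = io.StringIO()  # stand-in for a dedicated error log file
handler = logging.StreamHandler(log_buffer)
handler.setFormatter(
    logging.Formatter(
        "%(levelname)s project=%(project)s task=%(task)s "
        "errno=%(error_number)s %(message)s"
    )
)
logger = logging.getLogger("etl.errors")
logger.addHandler(handler)
logger.setLevel(logging.ERROR)
logger.propagate = False  # keep ETL errors out of the root logger


def run_task(project: str, task: str, func) -> bool:
    """Run one ETL task; log a structured error instead of crashing the run."""
    try:
        func()
        return True
    except Exception as exc:
        logger.error(
            str(exc),
            extra={
                "project": project,
                "task": task,
                # OSError carries errno; other exceptions get a placeholder.
                "error_number": getattr(exc, "errno", None) or "n/a",
            },
        )
        return False


def failing_load():
    """Hypothetical task that fails, to demonstrate the log output."""
    raise ValueError("target table is missing column 'loinc'")


ok = run_task("clinical_dw", "load_observations", failing_load)
print(ok, log_buffer.getvalue().strip())
```

Swapping the `StreamHandler` for a `logging.FileHandler` would write the same records to a separate file.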

5. Monitor daily ETL processes

Monitoring ETL processes is crucial for ensuring the solution’s quality. In most cases, validating the data and checking its flow between databases is sufficient for monitoring ETL processes. To achieve proper monitoring of the entire system, we recommend:

- Monitoring external jobs that deliver data from outside

- Tracking the time spent executing ETL processes

- Analyzing your architecture to identify potential threats and problems in the future

- Tracking standard performance metrics like response time, connectivity, etc.
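Two of these checks, timing each step and validating the data flow, can be sketched as follows. The `timed` helper, the `row_counts_match` check, and the simulated steps are hypothetical names and data introduced for this example.

```python
import time
from contextlib import contextmanager

metrics = {}  # step name -> elapsed seconds


@contextmanager
def timed(step: str):
    """Record how long a pipeline step takes."""
    start = time.perf_counter()
    try:
        yield
    finally:
        metrics[step] = time.perf_counter() - start


def row_counts_match(source_rows: int, target_rows: int, rejected: int) -> bool:
    """Validate the data flow: every source row was either loaded or rejected."""
    return source_rows == target_rows + rejected


with timed("extract"):
    source = list(range(1000))
with timed("transform"):
    target = [x for x in source if x % 10 != 0]  # simulate 100 rejected rows

check = row_counts_match(len(source), len(target), rejected=100)
print(metrics, check)
```

Comparing the recorded timings against previous runs is a simple way to notice a step that is slowing down as data volume grows.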

Conclusion

ETL is an essential tool for healthcare organizations looking to optimize their data management and analytics capabilities. ETL enables healthcare professionals to seamlessly integrate data from multiple sources, clean and transform the data, and load it into a centralized system for analysis and reporting.

By leveraging ETL, healthcare organizations can gain insights into patient care, operational efficiency, financial performance, and other critical areas. This can lead to improved decision-making, better patient outcomes, and ultimately, lower costs.

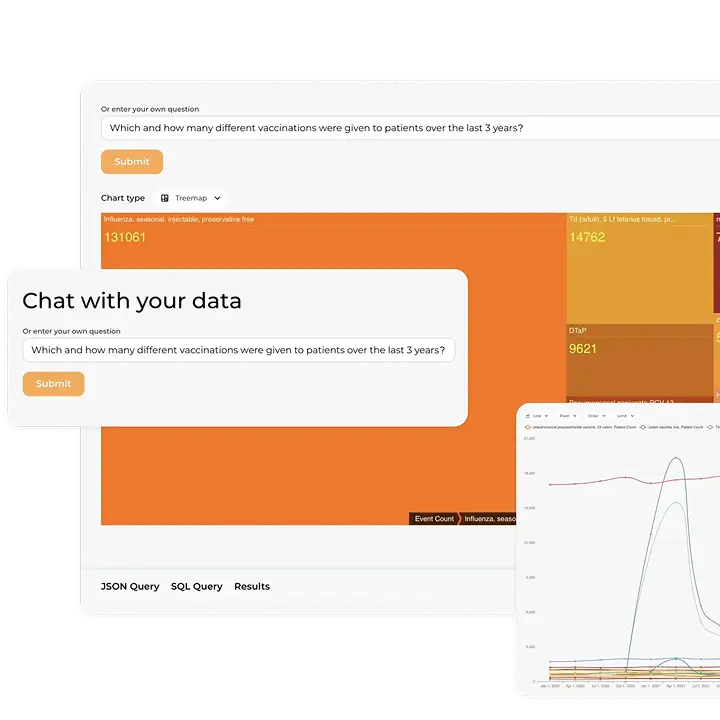

If you are looking for a flexible and scalable FHIR® server to support your ETL processes, look no further than the Kodjin FHIR® Server. Our team has extensive experience working on healthcare projects that utilize streaming ETL processes and we specialize in optimizing performance for these workflows. Our expert team of data engineers can help you manage your data seamlessly and efficiently. Contact us to see how our services can benefit your healthcare project!

FAQ

How can ETL optimize healthcare data workflows and improve decision-making?

ETL can optimize healthcare data workflows by extracting data from diverse sources, transforming it into a standardized format, and loading it into centralized systems. This streamlined process enhances data accessibility and accuracy, leading to more informed decision-making.

Are any specific tools or technologies recommended for ETL in the healthcare industry?

There are several tools suitable for ETL in the healthcare industry, such as Talend, Microsoft SSIS, and Iguana. Kodjin Interoperability Suite also offers a FHIR® Mapper tool, which utilizes customizable Liquid templates to transform legacy data formats into FHIR®. The choice depends on the specific needs, scale, and existing infrastructure of the healthcare organization.

How can healthcare organizations ensure data security and compliance during the ETL process?

Healthcare organizations can ensure data security and compliance during the ETL process by encrypting sensitive data, enforcing strict access controls, conducting regular audits, and adhering to regulatory standards like HIPAA.
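Encryption itself calls for a vetted cryptography library, so the sketch below shows a related but different technique: pseudonymizing patient identifiers with a keyed hash (HMAC-SHA256 from the Python standard library) before the data leaves the pipeline. The secret key, function name, and sample record are assumptions for illustration, and this alone does not make a pipeline HIPAA-compliant.

```python
import hashlib
import hmac

# Assumed secret; in practice it would come from a secrets manager, not code.
SECRET_KEY = b"replace-with-a-managed-secret"


def pseudonymize(patient_id: str) -> str:
    """Replace an identifier with a keyed hash that is stable across runs."""
    digest = hmac.new(SECRET_KEY, patient_id.encode("utf-8"), hashlib.sha256)
    return digest.hexdigest()


# Hypothetical record from the transformation stage.
record = {"patient_id": "P001", "loinc": "2345-7", "value": 5.4}
safe_record = {**record, "patient_id": pseudonymize(record["patient_id"])}
print(safe_record["patient_id"][:12])
```

Because the same input always yields the same pseudonym under a given key, records for one patient can still be linked in the target system without exposing the original identifier.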