Big data in healthcare is a valuable source of patterns and trends that healthcare professionals can use to address patients’ health issues. No wonder the industry turns to advanced technologies like cloud computing, which let clinicians work collaboratively on medical records, see a broader picture of a patient’s health, and use global health research data to their patients’ best advantage.

Even though the internet is replete with articles about how cloud computing can save stakeholders money, several big data storage challenges can make data collection less efficient, more complex, and more costly. In this article, we’ll discuss these challenges and suggest solutions for optimizing big data storage costs while ensuring security and efficiency.

The Motivation Behind Big Data Integration

Reducing healthcare costs

Global healthcare digitalization and advances in data storage have revealed the potential of big data in healthcare: research by McKinsey & Company estimates that implementing innovative healthcare pathways facilitated by big data could lead to annual cost savings of $300 billion to $450 billion.

Embracing value-based care

Big data plays a crucial role in aligning healthcare practices with value-based care principles by serving as a foundation for data-driven decision-making, patient risk identification, outcome prognosis, and other processes that need to be based on a comprehensive analysis of large datasets.

Harnessing unstructured data

According to healthtechmagazine.net, 80% of healthcare data is unstructured. To gain valuable insights from it, this data has to be organized and analyzed effectively. Big data technologies such as predictive analytics and AI help uncover patterns and trends within the data, offering the potential to predict future events and save lives. Let’s dig into use cases for more details on how big data transforms healthcare.

Healthcare Big Data Use Cases

The successful use of predictive analytics for Medicare and Medicaid fraud detection

Medicare fraud is estimated to cost $60 billion annually, so addressing Medicare and Medicaid fraud requires robust detection methods. A study of big data fraud detection using multiple Medicare data sources shows the advantages of using the Centers for Medicare and Medicaid Services (CMS) Medicare claims datasets for this research.

The study assesses fraud detection by combining multiple datasets and applying advanced processing and machine learning. It reports positive outcomes: the combined dataset with logistic regression (LR) yielded the best overall score at 0.816, closely followed by the Part B dataset with LR at 0.805, indicating robust fraud detection performance across different Medicare parts.
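
The idea of joining several claim sources per provider and scoring the result with logistic regression can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the study's pipeline: the provider IDs, the single feature per source, and the labels are made up, the model is a plain gradient-descent implementation, and treating the reported score as an AUC-style ranking metric is our assumption.

```python
import math

def merge_by_provider(left, right):
    """Inner-join two {provider_id: [features]} tables, concatenating features."""
    return {pid: left[pid] + right[pid] for pid in left if pid in right}

def sigmoid(z):
    # Clamp the input so math.exp cannot overflow on extreme values.
    z = max(-30.0, min(30.0, z))
    return 1.0 / (1.0 + math.exp(-z))

def fit_logistic_regression(rows, labels, learning_rate=0.5, epochs=500):
    """Fit weights and bias with plain batch gradient descent on log loss."""
    weights = [0.0] * len(rows[0])
    bias = 0.0
    for _ in range(epochs):
        grad_w = [0.0] * len(weights)
        grad_b = 0.0
        for x, y in zip(rows, labels):
            error = sigmoid(sum(w * v for w, v in zip(weights, x)) + bias) - y
            grad_w = [g + error * v for g, v in zip(grad_w, x)]
            grad_b += error
        weights = [w - learning_rate * g / len(rows) for w, g in zip(weights, grad_w)]
        bias -= learning_rate * grad_b / len(rows)
    return weights, bias

def predict_proba(model, x):
    weights, bias = model
    return sigmoid(sum(w * v for w, v in zip(weights, x)) + bias)

def auc(scores, labels):
    """Share of (fraud, non-fraud) pairs ranked correctly; ties count as half."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

# Hypothetical per-provider features from two claim sources.
part_b = {"P1": [0.2], "P2": [0.9], "P3": [0.1], "P4": [0.8], "P5": [0.3]}
part_d = {"P1": [0.1], "P2": [0.7], "P3": [0.3], "P4": [0.9], "P6": [0.5]}
fraud_labels = {"P1": 0, "P2": 1, "P3": 0, "P4": 1}

combined = merge_by_provider(part_b, part_d)  # P5 and P6 drop out of the join
provider_ids = sorted(combined)
rows = [combined[pid] for pid in provider_ids]
labels = [fraud_labels[pid] for pid in provider_ids]

model = fit_logistic_regression(rows, labels)
scores = [predict_proba(model, x) for x in rows]
print("AUC on the toy training data:", auc(scores, labels))
```

The toy example scores the same rows it was trained on, which is fine for showing the mechanics but would overstate performance on real claims data; a real evaluation would hold out providers or use cross-validation.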

Novartis: global healthcare company integrates a distributed processing system for big data workloads

Novartis collaborates with big tech companies such as Microsoft, ConcertAI, and Amazon to accelerate the development of AI-driven solutions. To harness big data, artificial intelligence, machine learning, and predictive analytics, the company employed a combination of Hadoop and Apache Spark, allowing efficient integration and analysis of diverse datasets. This approach supports research in technologies such as Next-Generation Sequencing (NGS), illustrating how big data can advance healthcare research.

The company is also advancing its capabilities in data science and artificial intelligence through the data42 program: using an extensive dataset of approximately 2 million patient-years of data, it seeks to foster innovation and explore potential transformations in drug development. More than 2,000 clinical studies were integrated into a unified platform during this project. The team has also examined numerous machine-learning models, aiming to uncover new correlations between drugs and diseases and thereby improve healthcare.

These examples show that the influence of big data in healthcare, from thwarting fraud to collaborative data analysis and research, is not merely theoretical but backed by practical implementation. However, storing big data can be expensive for several reasons.

Healthcare Big Data Storage Challenges

- Architectural complexity: Integrating big data in healthcare demands a resilient architecture that spans data collection, processing, storage, and serving for analytical purposes such as machine learning and dashboards. Building and maintaining such an architecture adds complexity to the storage landscape.

- Data ingestion: Maintaining data quality while gathering big data into a processing system is crucial for accurate insights. Inconsistent formatting across diverse sources adds complexity and expense, and synchronizing data from multiple sources takes meticulous effort to ensure uniformity and efficient transfer. Additionally, real-time ingestion demands scalability, which not every system can offer.

- Real-time streaming: The continuous generation of massive data streams from diverse sources, characterized by volume, variety, velocity, integrity, and value, demands immediate processing for timely analytics. Real-time stream processing in healthcare involves intricate operations, such as data cleaning, query processing, stream-stream join, stream-disk join, and data transformation. Delivering accurate real-time reactions and decisions with limited resources adds to the complexity of real-time streaming.

- Security and compliance: The sensitive nature of healthcare data containing patient information mandates robust measures to uphold confidentiality while ensuring its integrity and interoperability. The intricate regulatory landscape represented by standards like HIPAA makes it more challenging to design regulatory-compliant storage solutions. Safeguarding patient privacy and preventing unauthorized access or data breaches necessitate complex security protocols.

- Cost implications: The sheer volume that makes big data valuable in healthcare also makes it costly to store. As data accumulates rapidly, the costs of storage infrastructure, cloud services, and maintenance escalate. Balancing the need for extensive data storage with cost-effectiveness becomes a delicate task.

Overcoming Healthcare Big Data Storage Challenges

Leveraging Solutions with Scalable Microservice Architecture

As noted above, integrating big data demands a resilient architecture that covers data collection, processing, storage, and analytics, and building and sustaining it requires meticulous planning. To overcome this challenge, healthcare institutions should invest in modular architectures that allow scalability and flexibility.

How can scalable microservice architectures help?

Embracing a microservices approach lets each service scale independently. As a result, the system can scale horizontally to meet increasing needs as data volumes grow.

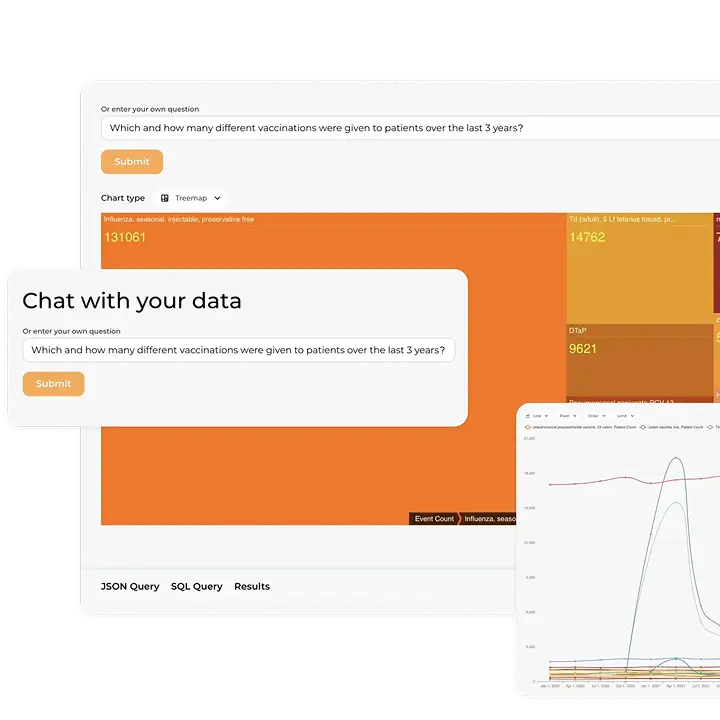

The Kodjin FHIR® Server is built with a scalable microservice architecture tailored for high-load systems, providing an unparalleled capacity to grow and ensuring it can handle the demands of big data storage in healthcare.

Mapping healthcare data

Synchronizing data from multiple sources requires ensuring the uniformity of big data. Overcoming these healthcare data storage challenges involves:

- Adopting standardized data formats.

- Implementing data validation checks.

- Leveraging advanced data integration tools like the ones in the Kodjin Interoperability Suite.
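
The first two steps above can be sketched as a small mapping-and-validation routine. This is an illustrative sketch only: the source field names (`patient_id`, `sex`, `dob`), the accepted date formats, and the validation rules are assumptions chosen for the example, and the target is a simplified FHIR-style Patient resource rather than a full profile.

```python
from datetime import datetime

# Assumed source conventions; extend these for real feeds.
GENDER_MAP = {"m": "male", "f": "female", "male": "male", "female": "female"}
ALLOWED_GENDERS = {"male", "female", "other", "unknown"}
DATE_FORMATS = ("%Y-%m-%d", "%m/%d/%Y", "%d.%m.%Y")

def normalize_date(raw):
    """Return the date as YYYY-MM-DD, or None if no known format matches."""
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(raw.strip(), fmt).date().isoformat()
        except ValueError:
            continue
    return None

def to_fhir_patient(record):
    """Map a hypothetical source record onto a simplified Patient resource."""
    resource = {
        "resourceType": "Patient",
        "id": str(record.get("patient_id", "")).strip(),
        "gender": GENDER_MAP.get(str(record.get("sex", "")).strip().lower(), "unknown"),
    }
    birth_date = normalize_date(str(record.get("dob", "")))
    if birth_date:
        resource["birthDate"] = birth_date
    return resource

def validate_patient(resource):
    """Apply local data-quality rules and return a list of problems found."""
    errors = []
    if resource.get("resourceType") != "Patient":
        errors.append("resourceType must be 'Patient'")
    if not resource.get("id"):
        errors.append("id is missing")
    if resource.get("gender") not in ALLOWED_GENDERS:
        errors.append("gender is not an allowed code")
    if "birthDate" not in resource:
        errors.append("birthDate is missing or unparseable")
    return errors

good = to_fhir_patient({"patient_id": 17, "sex": "F", "dob": "03/04/1985"})
bad = to_fhir_patient({"patient_id": "", "sex": "x", "dob": "31/31/2020"})
print(good, validate_patient(good))
print(bad, validate_patient(bad))
```

Requiring a birth date is a local policy in this sketch, not a rule of the FHIR specification. Returning a list of problems instead of raising on the first one lets an ingestion job log every issue with a record in a single pass.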

Apache Kafka and FHIR® Subscription for real-time streaming

FHIR® Subscriptions give FHIR®-first systems a standardized way to receive real-time updates: a client subscribes to specific events or resources so that new data is available immediately. This standardized approach streamlines communication and integrates smoothly into healthcare ecosystems.

Combined with Apache Kafka, chosen by over 80% of Fortune 100 companies for streaming data, FHIR® Subscriptions allow stakeholders to excel in handling big data streams. Kafka acts as a central hub for collecting and buffering data from multiple sources, facilitating seamless interaction between data-producing and data-consuming services. It is designed to process high-volume data, which makes it well suited to real-time big data streaming.

Leveraging the power of event-driven architecture over Kafka, Kodjin ensures seamless real-time data processing and the ability to handle big data streams efficiently.
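
To make the pattern concrete, here is a rough sketch of how a subscription and a buffered event log fit together. It is not Kodjin's implementation: the resource shape follows the FHIR R4 Subscription resource, but the criteria matcher only handles exact matches on top-level fields, the endpoint URL is hypothetical, and `InMemoryTopic` is an in-process stand-in for a Kafka topic so the example runs without a broker.

```python
from collections import defaultdict
from urllib.parse import parse_qsl

def build_subscription(criteria, endpoint):
    """Build an R4-style Subscription resource for a rest-hook channel."""
    return {
        "resourceType": "Subscription",
        "status": "requested",
        "reason": "Stream matching resources to downstream analytics",
        "criteria": criteria,
        "channel": {
            "type": "rest-hook",
            "endpoint": endpoint,
            "payload": "application/fhir+json",
        },
    }

def matches(criteria, resource):
    """Simplified matcher: 'Type?field=value' against top-level fields only."""
    resource_type, _, query = criteria.partition("?")
    if resource.get("resourceType") != resource_type:
        return False
    return all(str(resource.get(key)) == value for key, value in parse_qsl(query))

class InMemoryTopic:
    """Append-only log with per-consumer offsets (stand-in for a Kafka topic)."""

    def __init__(self):
        self._log = []
        self._offsets = defaultdict(int)

    def publish(self, event):
        self._log.append(event)

    def poll(self, consumer_group):
        # Each consumer group reads from its own offset, so a slow consumer
        # never blocks producers or other consumers.
        start = self._offsets[consumer_group]
        self._offsets[consumer_group] = len(self._log)
        return self._log[start:]

def route(resource, subscriptions, topic):
    """Publish one notification event per subscription the resource matches."""
    for sub in subscriptions:
        if matches(sub["criteria"], resource):
            topic.publish({"endpoint": sub["channel"]["endpoint"], "resource": resource})

subscriptions = [build_subscription("Observation?status=final", "https://example.org/hook")]
topic = InMemoryTopic()
route({"resourceType": "Observation", "id": "o1", "status": "final"}, subscriptions, topic)
route({"resourceType": "Observation", "id": "o2", "status": "preliminary"}, subscriptions, topic)
route({"resourceType": "Patient", "id": "p1"}, subscriptions, topic)
print(topic.poll("analytics"))
```

Only the finalized observation reaches the log. Because offsets are tracked per consumer group, an analytics service and an audit service could each read the same events at their own pace, which is the buffering role the text assigns to Kafka.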

Ensuring regulatory compliance in healthcare

Addressing security and compliance concerns starts with understanding the value and importance of data privacy. Given the complex and ever-changing regulatory landscape in healthcare, robust measures are crucial to ensure confidentiality, integrity, and interoperability, and to avoid the consequences of non-compliance with standards like HIPAA.

Using out-of-the-box HIPAA and ONC-compliant solutions like our FHIR® Server, or designing storage solutions that adhere to regulatory requirements, safeguards patient privacy. It prevents unauthorized access to healthcare data repositories and lets organizations invest money in the project instead of paying penalties for non-compliance.

Reduce cloud costs in healthcare

According to recent survey data, 70% of healthcare businesses have embraced cloud computing. The wide adoption of cloud solutions makes big data cloud cost reduction for healthcare a more pressing problem than ever before. Apart from security concerns, the voluminous nature of information contributes to the cost of using a cloud for healthcare data storage.

The Kodjin FHIR® Server addresses the challenge of big data storage cost optimization in healthcare with help from the following tactics:

- Low-code approach: The server can be supported and configured by business and technical analysts without programming skills; hence, there is no need to invest in expensive specialists to maintain it.

- Built on Rust: Rust delivers exceptional performance (check out the high performance of the Kodjin FHIR® Server) and provides memory and concurrency safety guarantees with minimal runtime overhead. This supports a stable operational environment that requires fewer resources, contributing to healthcare big data storage cost optimization.

- No vendor lock-in: The FHIR® Bulk API ensures robust data mobility through advanced bulk export and import capabilities, enabling extensive health dataset downloads in a single request and minimizing the system load. Check out our article about FHIR® Bulk API to learn how to extract and integrate data without vendor lock-in.
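
As a rough illustration of the bulk export flow mentioned in the last item, the sketch below builds a kick-off request and reads the resulting files. It follows the general shape of the FHIR Bulk Data Access specification (`$export`, the `Prefer: respond-async` header, NDJSON output), but the base URL is hypothetical, nothing is sent over the network, and authentication and status polling are left out.

```python
import json
from urllib.parse import urlencode
from urllib.request import Request

def build_export_request(base_url, resource_types=None, since=None):
    """Build (but do not send) a system-level $export kick-off request."""
    params = {}
    if resource_types:
        params["_type"] = ",".join(resource_types)
    if since:
        params["_since"] = since
    url = base_url.rstrip("/") + "/$export"
    if params:
        url += "?" + urlencode(params)
    headers = {"Accept": "application/fhir+json", "Prefer": "respond-async"}
    return Request(url, headers=headers, method="GET")

def output_files(manifest):
    """List (resource type, file URL) pairs from a completed export manifest."""
    return [(item["type"], item["url"]) for item in manifest.get("output", [])]

def parse_ndjson(text):
    """Parse newline-delimited JSON: one FHIR resource per non-empty line."""
    return [json.loads(line) for line in text.splitlines() if line.strip()]

# Hypothetical server address, used for illustration only.
request = build_export_request(
    "https://fhir.example.org/fhir",
    resource_types=["Patient", "Observation"],
    since="2024-01-01T00:00:00Z",
)
print(request.full_url)
print(request.header_items())
```

In a real client, the server would answer the kick-off with a status URL to poll; once the export completes, the manifest lists one or more NDJSON files per resource type, which `output_files` and `parse_ndjson` would then process.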

By implementing smart storage solutions, organizations can ensure a secure, efficient, and cost-optimized approach to managing the wealth of information critical to modern healthcare. Moreover, these storage strategies enable advanced data mining in healthcare, allowing organizations to extract actionable insights from vast datasets to improve patient outcomes, optimize operations, and support research initiatives. This approach addresses the challenge of low-cost big data storage in healthcare while aligning with the industry’s standards and data protection requirements.

Final Thoughts

Healthcare big data storage cost optimization is important for harnessing unstructured data for informed decision-making and embracing value-based care. By investing in an innovative data storage solution, stakeholders can use the power of big data to combat fraud and advance collaborative research initiatives. Collaborate with Edenlab to ensure the scalability of your big data repository, protect sensitive patient data from unauthorized access, and optimize big data storage costs in healthcare.